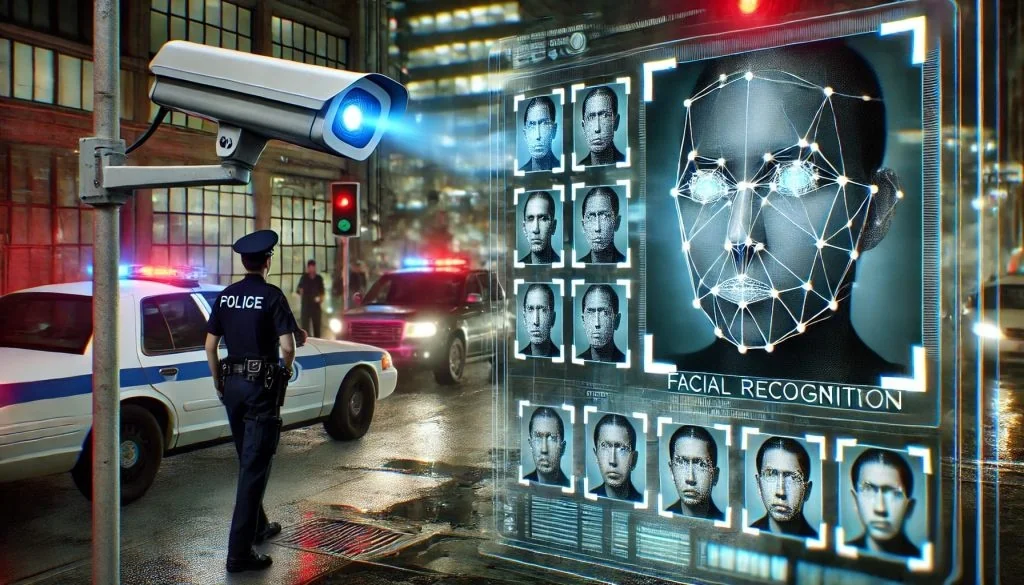

Angela Lipps, a Tennessee grandmother, spent five months in jail after a Clearview AI facial recognition tool falsely tied her to a bank fraud case in North Dakota. Her case shows exactly what happens when police rely on unverified algorithmic matches without proper human oversight or independent investigation.

How a False Match Led to Five Months in Jail

It feels like a plot from a dystopian thriller, but it happened to a real person. Law enforcement used Clearview AI to link Lipps to crimes she never committed, treating an algorithmic error as absolute proof. She didn’t just lose her freedom; she lost her reputation and nearly half a year with her family. The system flagged her based on visual data, yet police acted on the match without sufficient verification.

When an AI says “this is the person,” officers often treat that as a lock, not a lead. The assumption seems to be that the machine doesn’t make mistakes. But as Lipps’ ordeal proves, machines do make mistakes. And when those mistakes cost someone five months in jail, the damage is measured in human lives, not just lost data points.

The Critical Failure in Police Protocols

You might be thinking, “Surely they checked the evidence before locking her up?” Apparently not. Reports suggest the facial recognition match was treated as sufficient probable cause. Lipps was arrested and held based on this digital identification alone. It wasn’t until the error was recognized that she was finally released.

This isn’t just a glitch in the code. It’s a systemic failure in how police departments deploy these tools. Lipps told reporters she had no connection to North Dakota; as far as that investigation was concerned, she did not exist until the algorithm decided otherwise. Yet once that digital match happened, the physical consequences were immediate and severe.

Why Efficiency Can’t Trump Accuracy

The implications of this case are massive. Clearview AI has built its business on scraping billions of images from the internet to make them searchable for law enforcement. This case serves as a brutal, real-world stress test for that business model. If a woman can be misidentified as a suspect 1,000 miles away, what does that mean for everyone else whose face is in that database?

- Critics are calling for stricter regulations on facial recognition in policing.

- Current deployments lack necessary safeguards to prevent wrongful arrests.

- Efficiency often trumps accuracy in high-pressure police work, creating dangerous blind spots.

Why are we letting an algorithm make the call on someone’s liberty without a human in the loop doing the heavy lifting? The answer seems simple: a fast match is easier to act on than a slow verification. But speed doesn’t matter if it’s built on a foundation of error.

What This Means for Your Privacy

Lipps’ story raises a critical question about the future of surveillance technology. Do you want a world where your face is a warrant for arrest, even if the machine gets it wrong? The technology is advancing fast, but the legal and ethical frameworks governing it are lagging dangerously behind. We are handing over the keys to the kingdom to software that can’t distinguish between a lookalike and a criminal.

The aftermath of this case will likely spark a fierce debate in legislative halls and courtrooms across the country. Lawmakers will have to decide if the convenience of AI-assisted policing is worth the risk of wrongful incarceration. For now, Lipps is back home, but her five-month ordeal serves as a grim warning to everyone watching.

The Practitioner’s View on False Positives

From a technical standpoint, this is a classic false positive scenario that experts have seen in facial recognition studies for years. The technology isn’t 100% accurate, especially with low-resolution images. However, the real failure here isn’t just the algorithm; it’s the protocol. If a matching score comes back, it should trigger a deeper investigation, not an immediate arrest warrant.
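To see why a false positive at this scale is not a freak event, a back-of-the-envelope calculation helps. The sketch below is purely illustrative: the gallery size stands in for the "billions of images" mentioned above, and the one-in-ten-million false match rate is a hypothetical figure, not a measured property of Clearview AI or any other product.

```python
def expected_false_matches(gallery_size: int, one_in: int) -> float:
    """Expected number of innocent people who match a single probe photo.

    gallery_size: number of faces searched (hypothetical value below).
    one_in: the system wrongly matches 1 out of every `one_in` comparisons
            (hypothetical value below).
    """
    return gallery_size / one_in


# Hypothetical numbers chosen only to illustrate the base-rate effect.
GALLERY_SIZE = 3_000_000_000   # stands in for "billions of images"
ONE_IN = 10_000_000            # assumed false match rate: 1 in 10 million

if __name__ == "__main__":
    n = expected_false_matches(GALLERY_SIZE, ONE_IN)
    print(f"Expected false matches per search: {n:.0f}")
```

Under these assumed numbers, even a system that is wrong only once in ten million comparisons would still surface hundreds of innocent lookalikes for every search, which is why a match alone says so little.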

The fact that the system was treated as infallible by the arresting officers is a breakdown in human oversight. We need to stop treating these tools as the final answer and start treating them strictly as investigative leads that require corroboration before any liberty is taken.
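The "lead, not a lock" rule can be written down as a simple decision gate. What follows is a minimal sketch, not any department's actual policy: the `Lead` type, the `next_step` function, the score threshold, and the corroboration count are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum


class Action(Enum):
    DISCARD = "discard the match"
    INVESTIGATE = "investigate further, no arrest"
    HUMAN_REVIEW = "send to human review for a warrant decision"


@dataclass
class Lead:
    match_score: float                      # similarity score in [0, 1]
    corroboration: list[str] = field(default_factory=list)  # independent evidence


# Hypothetical policy values, not taken from any real protocol.
SCORE_THRESHOLD = 0.90
MIN_CORROBORATING_ITEMS = 2


def next_step(lead: Lead) -> Action:
    """A face match alone can never lead to an arrest in this sketch."""
    if lead.match_score < SCORE_THRESHOLD:
        return Action.DISCARD
    if len(lead.corroboration) < MIN_CORROBORATING_ITEMS:
        # High score, but nothing independent backs it up: keep digging.
        return Action.INVESTIGATE
    # Even with corroboration, a person makes the final call.
    return Action.HUMAN_REVIEW


if __name__ == "__main__":
    match_only = Lead(match_score=0.99)
    print(next_step(match_only).value)
```

The key design choice is that no branch returns "arrest": the strongest possible outcome is a human review, and a high score with no independent evidence, the situation described in this case, only ever yields more investigation.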

The lesson is clear: we can’t automate justice. Until these tools are proven reliable and backed by real safeguards, cases like this shouldn’t just be news stories. They should be red flags.