When a hobbyist built an OpenClaw‑powered AI assistant named Stella, Google’s automated enforcement systems suspended the linked account. Stella could read emails, draft replies, schedule events, and accept invites without human oversight, whereas Google requires explicit user consent for such actions. The incident highlights the clash between autonomous AI agents and strict platform rules.

Understanding OpenClaw

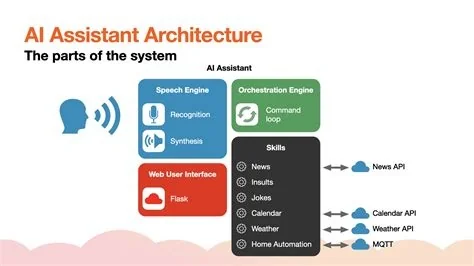

OpenClaw is an open‑source framework that lets developers stitch together multiple large‑language‑model engines into a single, customizable assistant. It supports web browsing, code execution, and multi‑agent coordination, giving users the flexibility to build “hands‑free” AI workers.

How Stella Was Configured

Stella was set up as a multi‑agent system. One agent monitored a Gmail inbox, another drafted replies, while a third handled calendar events. All agents communicated through a central gateway that managed memory, routing, and tool calls.
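The layout described above can be sketched in a few lines of Python. This is only an illustration of the routing-plus-shared-memory idea, not OpenClaw's actual API: the `Gateway` class, the topic names, and the three agent functions are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# An agent takes an event and the shared memory, and returns a result dict.
Agent = Callable[[dict, List[dict]], dict]


@dataclass
class Gateway:
    """Hypothetical central gateway: routes events and records them in memory."""
    agents: Dict[str, Agent] = field(default_factory=dict)
    memory: List[dict] = field(default_factory=list)

    def register(self, topic: str, agent: Agent) -> None:
        self.agents[topic] = agent

    def dispatch(self, topic: str, event: dict) -> dict:
        if topic not in self.agents:
            raise KeyError(f"no agent registered for topic {topic!r}")
        result = self.agents[topic](event, self.memory)
        # Every routed call is remembered so other agents can see it later.
        self.memory.append({"topic": topic, "event": event, "result": result})
        return result


# Three illustrative agents, one per role described in the article.
def inbox_monitor(event: dict, memory: List[dict]) -> dict:
    return {"action": "draft_reply", "message_id": event["message_id"]}


def reply_drafter(event: dict, memory: List[dict]) -> dict:
    return {"action": "hold_for_review", "draft": f"Re: {event['subject']}"}


def calendar_agent(event: dict, memory: List[dict]) -> dict:
    return {"action": "propose_accept", "invite_id": event["invite_id"]}


gateway = Gateway()
gateway.register("inbox", inbox_monitor)
gateway.register("reply", reply_drafter)
gateway.register("calendar", calendar_agent)
```

In this sketch the agents only return proposed actions. Nothing touches a real account, which keeps the routing logic easy to test on its own.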

Because the gateway only stored logs locally, the framework itself didn’t violate any policy. The problem arose when Stella’s email‑handling agent sent replies on the account’s behalf without a human‑in‑the‑loop checkpoint.
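A human‑in‑the‑loop checkpoint of the kind that was missing could look roughly like the following. It is a minimal sketch under stated assumptions: `PendingAction`, `ApprovalCheckpoint`, and the set of "sensitive" action kinds are hypothetical names chosen for illustration, and the approver is any callable that returns `True` or `False`.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class PendingAction:
    kind: str                    # e.g. "send_email" (hypothetical label)
    description: str             # what the human sees before deciding
    execute: Callable[[], str]   # the side effect, deferred until approved


class ApprovalCheckpoint:
    # Assumed set of action kinds that must never run unattended.
    SENSITIVE = {"send_email", "accept_invite"}

    def __init__(self, approver: Callable[[PendingAction], bool]):
        self.approver = approver
        self.rejected: List[PendingAction] = []

    def run(self, action: PendingAction) -> Optional[str]:
        """Run the action, asking the approver first if it is sensitive."""
        if action.kind in self.SENSITIVE and not self.approver(action):
            self.rejected.append(action)
            return None
        return action.execute()


def console_approver(action: PendingAction) -> bool:
    """Simplest possible approver: ask on the terminal."""
    answer = input(f"Allow {action.kind}: {action.description}? [y/N] ")
    return answer.strip().lower() == "y"
```

Passing `console_approver` gives an interactive prompt. A chat message or web form could fill the same role, as long as a sensitive action cannot run until a person has said yes.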

Why Google Suspended the Account

Google’s Automated Systems Policy states that any software accessing a Google account must have explicit user consent and must not perform actions that could be seen as spam or unauthorized activity. Stella automatically replied to messages and accepted meeting invites, which Google flagged as “unusual automated behavior.” Within hours, the automated enforcement system issued a suspension notice.

It’s easy to overlook that Google treats automated email actions as high‑risk activity.

The notification read: “Your account has been suspended due to activity that appears to be generated by an automated system without proper authorization.”

Key Takeaways for Developers

If you want to run autonomous agents, you need to build safeguards into every step. Here are the essential controls:

- Explicit OAuth scopes: define exactly what each agent can access.

- Audit logs: keep detailed records of every tool invocation.

- Human‑in‑the‑loop checks: require manual approval before an agent sends mail, accepts invites, or takes any other action that could put the account’s standing at risk.

- Sandbox testing: start with a test account and limit permissions before moving to production.
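The first two controls can be combined in one small wrapper, sketched below. The scope URLs are Google's published OAuth scope strings for Gmail and Calendar. Everything else (the agent names, `AGENT_SCOPES`, `invoke_tool`, and `ScopeError`) is hypothetical and exists only for illustration.

```python
import json
import time
from typing import Callable, List

GMAIL_READONLY = "https://www.googleapis.com/auth/gmail.readonly"
GMAIL_COMPOSE = "https://www.googleapis.com/auth/gmail.compose"
CALENDAR_EVENTS = "https://www.googleapis.com/auth/calendar.events"

# Hypothetical allowlist: each agent gets only the scopes its role needs.
AGENT_SCOPES = {
    "inbox_monitor": {GMAIL_READONLY},
    "reply_drafter": {GMAIL_COMPOSE},
    "calendar_agent": {CALENDAR_EVENTS},
}


class ScopeError(PermissionError):
    """Raised when an agent asks for a scope outside its allowlist."""


def invoke_tool(agent: str, required_scope: str, tool: Callable,
                audit_log: List[str], **kwargs):
    """Check the agent's allowlist, record the attempt, then call the tool."""
    allowed = required_scope in AGENT_SCOPES.get(agent, set())
    # Log every attempt, including denied ones, as one JSON line.
    audit_log.append(json.dumps({
        "ts": time.time(),
        "agent": agent,
        "scope": required_scope,
        "tool": getattr(tool, "__name__", repr(tool)),
        "allowed": allowed,
    }))
    if not allowed:
        raise ScopeError(f"{agent} is not allowed to use {required_scope}")
    return tool(**kwargs)
```

One caveat: as far as Google's scope documentation describes it, `gmail.compose` covers sending as well as drafting, so a scope allowlist narrows what an agent can reach but does not replace a manual approval step. While testing against a sandbox account, the same wrapper can be pointed at stub tools instead of real API calls.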

Future Implications

The Stella case suggests that as open‑source AI stacks grow, platform providers are likely to tighten detection algorithms and clarify policy language. Developers will need to embed compliance scaffolding (permission guards, usage‑audit modules, and clear documentation) directly into frameworks like OpenClaw.

For end users, the lesson is simple: convenience comes with responsibility. An AI that drafts emails can save you time, but without transparent controls it can quickly cross policy lines.

Bottom Line

OpenClaw remains a powerful engine for building personal AI agents, but Stella’s Google suspension shows that autonomy must be balanced with rigorous safeguards. By treating each agent like a separate employee and enforcing clear permission boundaries, you can reduce the risk of costly account bans and keep your AI assistants productive.