Nvidia is set to deliver a record‑breaking Q4, projecting revenue between $63.7 billion and $66.3 billion while unveiling the next‑gen Vera Rubin platform, which promises up to five times the AI training performance of its predecessor. Recent Chinese import clearance for the H200 chip and a new equity stake in OpenAI could further strengthen Nvidia’s AI leadership.

Q4 Revenue and Earnings Outlook

The company expects revenue to land between $63.7 billion and $66.3 billion, a range that sits above Wall Street’s median estimate. Earnings per share are projected at $1.50, a sizable jump from last year’s $0.89. If you’re tracking Nvidia’s growth trajectory, results in that range would extend its 13‑quarter streak of beating revenue forecasts.
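As a quick sanity check on these figures, the short Python sketch below computes the midpoint of the guided range, the width of the range relative to that midpoint, and the implied year‑over‑year EPS growth. The function and constant names are illustrative, and the inputs are simply the numbers quoted in this article.

```python
# Figures quoted in the article; all names here are illustrative only.
GUIDANCE_LOW_BN = 63.7   # low end of guided revenue, in $ billions
GUIDANCE_HIGH_BN = 66.3  # high end of guided revenue, in $ billions
EPS_PROJECTED = 1.50     # projected earnings per share, in $
EPS_PRIOR_YEAR = 0.89    # year-ago earnings per share, in $


def guidance_midpoint(low: float, high: float) -> float:
    """Return the midpoint of a guided range."""
    return (low + high) / 2


def half_width_pct(low: float, high: float) -> float:
    """Return the half-width of the range as a percentage of its midpoint."""
    mid = guidance_midpoint(low, high)
    return (high - low) / 2 / mid * 100


def growth_pct(current: float, prior: float) -> float:
    """Return the percentage change from prior to current."""
    return (current - prior) / prior * 100


if __name__ == "__main__":
    mid = guidance_midpoint(GUIDANCE_LOW_BN, GUIDANCE_HIGH_BN)
    width = half_width_pct(GUIDANCE_LOW_BN, GUIDANCE_HIGH_BN)
    eps_growth = growth_pct(EPS_PROJECTED, EPS_PRIOR_YEAR)
    print(f"Guidance midpoint: ${mid:.1f}B (plus or minus {width:.1f}%)")
    print(f"Implied EPS growth: {eps_growth:.1f}%")
```

On the quoted numbers, the midpoint works out to about $65.0 billion with a band of roughly 2% on either side, and the move from $0.89 to $1.50 is an increase of about 68.5%.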

Projected Revenue Growth

Revenue guidance reflects a roughly 60% year‑over‑year increase, driven by data‑center demand and the upcoming Rubin rollout. The outlook also accounts for potential headwinds from tighter export controls.

Earnings Per Share Forecast

Analysts forecast EPS of $1.50, which points to strong profit margins. The figure is consistent with the company’s confidence in its AI‑centric product pipeline.

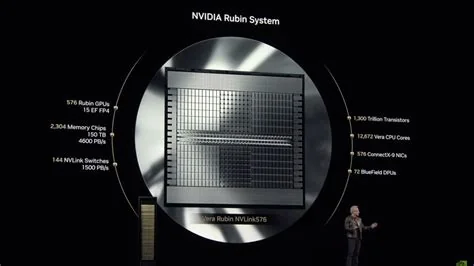

Vera Rubin Platform Boosts AI Performance

Rubin, Nvidia’s latest GPU architecture, is engineered to deliver up to five times the training throughput of the Blackwell generation. The platform is slated to reach partners in the second half of the year.

Five‑Times Training Speed

Benchmarks suggest Rubin can cut model‑training cycles by weeks, translating into faster time‑to‑market for AI solutions. This performance uplift could reshape how enterprises allocate compute budgets.
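To see how a throughput multiple translates into wall‑clock time, here is a minimal Python sketch. It assumes a hypothetical 30‑day baseline run and treats the share of that time which actually scales with GPU throughput as an adjustable parameter, in the spirit of Amdahl’s law. Neither number comes from Nvidia, and "up to five times" is an upper bound rather than a typical result.

```python
def training_days(
    baseline_days: float,
    speedup: float,
    accelerated_fraction: float = 1.0,
) -> float:
    """Estimate training time after a throughput speedup.

    baseline_days: duration of the run on the older hardware (hypothetical).
    speedup: throughput multiple on the new hardware, e.g. 5.0.
    accelerated_fraction: share of baseline time that scales with GPU
        throughput; the rest (data loading, checkpointing, and so on)
        is assumed to stay fixed.
    """
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be between 0 and 1")
    fixed = baseline_days * (1.0 - accelerated_fraction)
    scaled = baseline_days * accelerated_fraction / speedup
    return fixed + scaled


if __name__ == "__main__":
    # Hypothetical 30-day run, best case versus 80% of time accelerated.
    print(f"Best case: {training_days(30, 5.0):.1f} days")
    print(f"80% accelerated: {training_days(30, 5.0, 0.8):.1f} days")
```

Under these assumptions, a 30‑day run drops to about 6 days in the best case and to about 10.8 days if only 80% of the time scales, so the saving is measured in weeks only for runs that were already long.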

Product Rollout Timeline

Rubin‑based products will begin shipping in the latter half of the year, positioning Nvidia to capture early demand from cloud providers and enterprise AI teams.

China Approval Unlocks AI Chip Market

Chinese regulators recently granted import approval for Nvidia’s H200 AI chip, clearing a major hurdle for the company’s presence in one of the world’s largest AI markets. The approval follows U.S. export clearance and is expected to revive a sales pipeline that had stalled.

H200 Chip Import Clearance

The H200 approval came after a high‑profile visit from CEO Jensen Huang and allows hundreds of thousands of units to enter China. Market participants reported a premium of roughly 50% on second‑hand H200 servers.

Impact on Sales Pipeline

While initial shipments may focus on research‑only usage, the clearance reopens a critical growth channel that could offset pressures from other regions.

Strategic Partnerships with OpenAI and Meta

Nvidia is finalizing a $30 billion equity investment in OpenAI, deepening its stake in the generative‑AI arena. Simultaneously, a long‑term AI‑infrastructure partnership with Meta aims to advance large‑scale AI systems.

Equity Investment in OpenAI

The cash infusion replaces an earlier infrastructure‑only deal, tying Nvidia’s capital to OpenAI’s broader funding round and aligning both firms on future model development.

AI Infrastructure Deal with Meta

Under the Meta agreement, Nvidia will provide GPU‑accelerated infrastructure to power Meta’s next wave of AI services, reinforcing Nvidia’s role as the backbone of large‑scale AI workloads.

What This Means for AI Engineers

From a practitioner’s perspective, Rubin’s performance boost means you can experiment with larger architectures without a matching jump in compute budgets. The OpenAI investment should help keep Nvidia’s roadmap aligned with cutting‑edge language models, while the China approval restores a foothold in a vital market.

Market Reaction and Outlook

Following the latest guidance, Nvidia shares edged up 0.68% in regular trading and added a modest 0.036% in after‑hours trading. The stock shows a positive trend across short‑, medium‑, and long‑term time frames, though valuation metrics warrant caution.

All eyes will be on the upcoming earnings call. If Nvidia extends its streak of record results, delivers on Rubin’s fivefold performance claim, and turns China’s clearance into a steady chip pipeline, it could cement its dominance in the AI compute landscape.