Federal and state courts are finally drawing a hard line for attorneys who let artificial intelligence generate fake case law. This is no longer about typos; it is about fabricated citations landing in court filings. You need to understand that relying on AI without human oversight is now treated as a direct ethical violation. The era of taking AI output on trust is over, and the legal system is waking up fast to this new danger.

Third Circuit Delivers Split Decision

Just last week, the U.S. Court of Appeals for the Third Circuit handed down a ruling that should send a shiver down every litigator's spine. The court reprimanded Daniel A. Pallen, a lawyer from Media, Pennsylvania, for submitting briefs stuffed with nonexistent legal citations. The findings were stark: Pallen "neither read nor verified the existence of the cited authorities" before filing them.

Why the Court Got Angry

According to the Third Circuit, this wasn't a harmless slip-up. It was a violation of state ethics rules requiring competent representation. The court noted that Pallen took none of the steps necessary to ensure that his AI-generated content was genuine. In a divided ruling, the majority concluded that relying on AI without human oversight is a failure of professional duty.

Oregon Court Imposes Record Fine

This isn't an isolated incident. Just days before the Third Circuit ruling, the Oregon Court of Appeals imposed a record-breaking penalty on another attorney. William Ghiorso, a Salem-based lawyer, was fined $10,000 for including false legal citations in a brief. The court was blunt, stating that the quotations and cases were "contrived from thin air" and fabricated by AI.

- The Fine: A $10,000 financial penalty sets a new benchmark for accountability.

- The Reason: Ghiorso cited legal quotations that simply do not exist in any database.

- The Lesson: It is a costly reminder that you can’t let a machine write your arguments without a human eye on the screen.

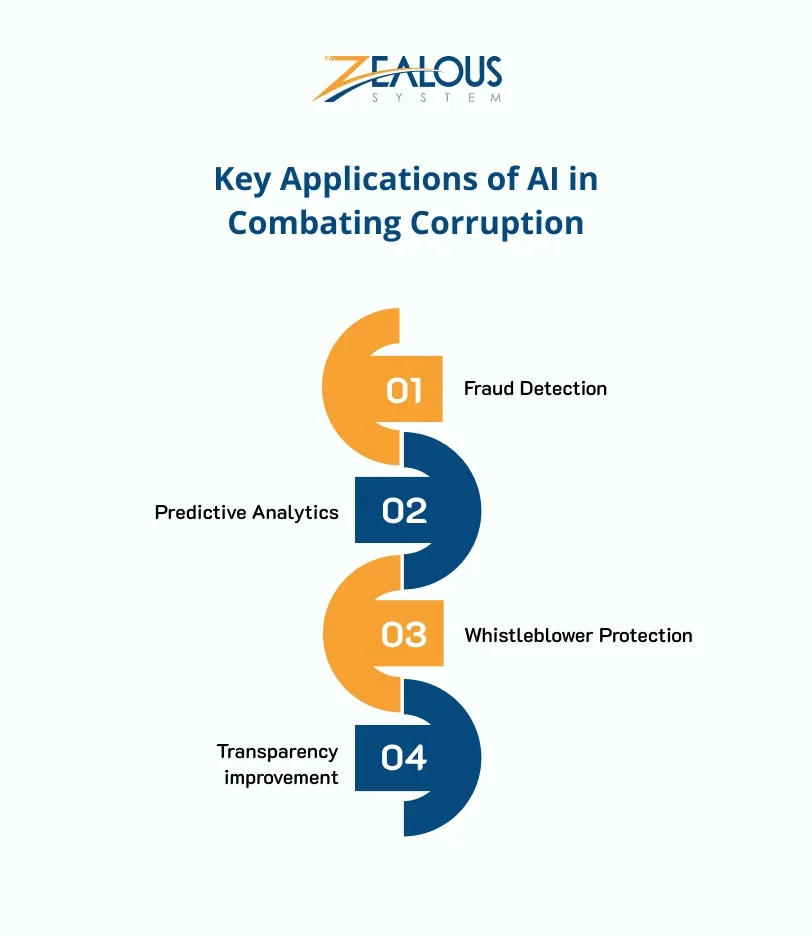

What This Means for Your Practice

These rulings send a unified message to the entire bar. If you use AI, you must verify every single citation. Period. The courts are no longer willing to accept "the AI made me do it" as an excuse. Despite a dissent, the Third Circuit majority established that failing to verify citations is a direct breach of ethical obligations.

The implications are massive. Firms that haven’t updated their protocols are now at risk. Senior partners can no longer hide behind junior associates or automated tools if things go wrong. The standard for “competent representation” has shifted. It now explicitly includes the duty to audit AI outputs.

Practitioners' Perspective: The New Reality

For lawyers on the ground, this is a wake-up call that feels more like a siren. The days of blindly copy-pasting AI-generated text into a brief are over. You have to treat every output as a draft that requires a rigorous, line-by-line fact-check.

Imagine the pressure. You're rushing to meet a deadline, you ask the AI for a supporting case, and it gives you a perfect-looking citation. If you don't pull up the case in a legal research database and that citation turns out to be fiction, you could face a reprimand, a fine, or worse. The cost of a single error is no longer just a wasted hour; it can mean lasting damage to your reputation and a steep financial penalty.

The courts are making it clear: AI is a tool, not a replacement for legal judgment. If you file its hallucinations, the judge will hold you accountable. The technology is here, but the responsibility remains squarely on human shoulders. Don't let your brief become a cautionary tale.