OpenAI’s recent agreement with the US Department of Defense has sparked controversy, with many ChatGPT users rushing to cancel their subscriptions. You’re probably wondering what this deal means for OpenAI and its users. The partnership allows OpenAI’s artificial intelligence models to be used on classified government networks, raising concerns about AI ethics and data privacy.

What’s Behind the Deal?

The deal includes safeguards and “guardrails” designed to prevent misuse, such as restrictions on fully autonomous weapons and mass surveillance. However, critics argue that language permitting use for “all lawful purposes” leaves open how the AI systems could ultimately be deployed. You might be wondering what exactly this means for the future of AI.

User Backlash

As news of the deal spread, online communities dedicated to artificial intelligence and ChatGPT filled with users expressing dissatisfaction. Some described the partnership as a breach of trust, while others shared step-by-step guides on how to export personal data and delete accounts. The reaction was swift, with phrases such as “no ethics” and “selling out” trending across these communities.

OpenAI’s Stance

OpenAI has emphasized that its AI models will not be used to develop autonomous weapons. The company has stated that the agreement includes clear red lines and additional safety measures. But for many users, this isn’t enough. They worry that OpenAI is compromising its values and putting its technology at risk of being used for harmful purposes.

The Bigger Picture

The controversy has also intensified comparisons between OpenAI and its competitor Anthropic. Some observers have noted that Anthropic declined to proceed with certain government arrangements over concerns related to mass surveillance and fully autonomous weapons systems. This contrast has led critics to question whether OpenAI is prioritizing profits over principles.

What’s Next?

OpenAI has faced mounting pressure to reconsider its deal with the Pentagon, but it’s unclear whether it will back down. Meanwhile, rival AI platforms already appear to be benefiting from the controversy: Anthropic’s chatbot Claude has climbed to the top of the Apple App Store rankings in several regions.

Implications for the AI Industry

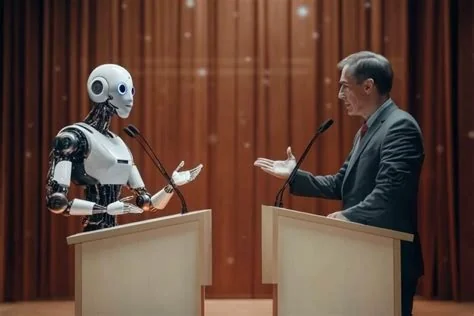

The OpenAI-Pentagon deal highlights a broader divide within the artificial intelligence sector over whether commercial AI models should be integrated into military applications. As AI technology continues to evolve, companies must prioritize transparency, accountability, and ethics in their decision-making. The question is whether companies like OpenAI can balance their values with the demands of government contracts, or whether they will be forced to choose between profits and principles.

- The AI industry is at a crossroads, and the decisions made today will have far-reaching consequences for the future of AI development and deployment.

- It’s essential to consider the potential consequences of AI being used in military applications.

- Key questions remain: what are the implications of this deal, and how can we ensure that AI systems are developed and deployed in ways that prioritize human values and safety?

As the consequences of this deal unfold, these are the questions that AI companies, governments, and users alike will have to grapple with.