The rapid evolution of artificial intelligence (AI) has brought numerous benefits, but it has also raised significant concerns about misuse. If you worry that AI could be used to produce harmful content, you are not alone: 68% of people share that concern. The worry is well founded. Researchers have identified several risks associated with AI, including privacy breaches, biased decision-making, and job displacement.

Understanding AI and Its Risks

So, what exactly falls under the term AI? It’s a broad family of technologies that enable machines to learn, reason, and make decisions. The most common include machine learning (ML), its subfield deep learning (DL), and generative AI (GenAI). Each gives rise to different kinds of risk, from subtle, data-driven bias in ML to a lack of transparency in DL.

Micro-Level Risks: How AI Affects You Directly

At the micro level, AI risks play out in everyday life and affect individuals directly: unfair recommendations, privacy leaks, and outcomes whose reasoning you cannot see. ML algorithms can produce discriminatory scoring, false positives and negatives, and personalization “creep.” DL systems can suffer from misrecognition, edge-case failures, and adversarial fragility, where small, deliberately crafted changes to an input cause a wrong output. GenAI can produce hallucinations, fabricated citations, and ambiguity about who authored a piece of content. You may have run into some of these problems yourself, so it’s worth understanding how they arise.

Meso-Level Risks: Industry-Wide Problems

What about the meso level, where risks emerge across industries and organizations? Here the stakes are higher, because a weakness in one widely deployed model can surface wherever that model is used. Researchers have identified potential vulnerabilities in embodied large language models (LLMs), which control robots and other physical systems, at the level of actions rather than only semantic manipulation. Can attackers craft prompts whose actions look benign yet lead to harmful physical consequences? That open question shows why further research into AI risk is needed.

Mitigating AI Risks: A Comprehensive Framework

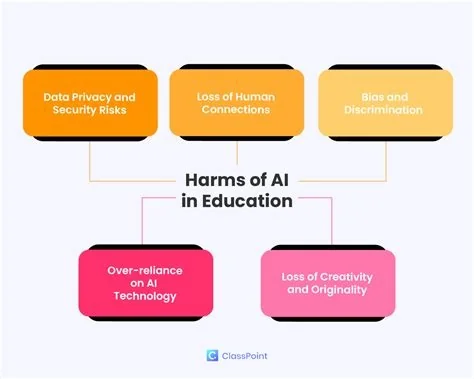

As AI becomes more deeply integrated into our lives, we need to address the real risks of human over-reliance on it. The problem isn’t only that AI can be wrong; it’s that its confident delivery discourages disagreement, which makes it harder for you to spot gaps in AI-driven decisions. Mitigating these risks calls for a comprehensive risk framework that accounts for each layer of AI risk, from the individual (micro) level to the industry-wide (meso) level.
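One way to make a layered framework concrete is a simple risk register that files each risk by level and by technology. The sketch below is a hypothetical illustration, not an established standard or any particular organization's method: the `Level` enum, the `Risk` class, the `REGISTER` list, and the `risks_at` helper are names invented here, and the entries are simply the risks this article describes.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Level(Enum):
    """Hypothetical risk layers, following the article's micro/meso split."""
    MICRO = "micro"  # risks that affect individuals directly
    MESO = "meso"    # risks that emerge across industries and organizations


@dataclass(frozen=True)
class Risk:
    """One entry in a hypothetical risk register."""
    level: Level
    technology: str   # e.g. "ML", "DL", "GenAI", "embodied LLM"
    description: str


# Entries drawn from the risks described in this article; not an exhaustive list.
REGISTER: List[Risk] = [
    Risk(Level.MICRO, "ML", "discriminatory scoring"),
    Risk(Level.MICRO, "ML", "false positives/negatives"),
    Risk(Level.MICRO, "ML", "personalization creep"),
    Risk(Level.MICRO, "DL", "misrecognition"),
    Risk(Level.MICRO, "DL", "edge-case failures"),
    Risk(Level.MICRO, "DL", "adversarial fragility"),
    Risk(Level.MICRO, "GenAI", "hallucinations"),
    Risk(Level.MICRO, "GenAI", "fabricated citations"),
    Risk(Level.MICRO, "GenAI", "authorship ambiguity"),
    Risk(Level.MESO, "embodied LLM",
         "benign-looking actions with harmful physical consequences"),
]


def risks_at(level: Level, technology: Optional[str] = None) -> List[Risk]:
    """Return register entries at a given level, optionally filtered by technology."""
    return [
        r for r in REGISTER
        if r.level is level and (technology is None or r.technology == technology)
    ]


if __name__ == "__main__":
    # Walk the register layer by layer so that no level is skipped in a review.
    for level in Level:
        print(f"{level.value}:")
        for r in risks_at(level):
            print(f"  [{r.technology}] {r.description}")
```

Running the script prints each layer's risks grouped under their technology. A fuller framework could add fields such as likelihood, severity, or a mitigation owner to each entry.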

Moving Forward: AI Safety and Risk Management

As AI continues to evolve, organizations and individuals need to understand its risks and act to reduce them. That means developing robust risk management strategies, investing in AI safety research, and promoting transparency and accountability in AI-driven decision-making. But how can we balance the benefits of AI against the need to mitigate its risks?

It’s up to us to prioritize AI safety and develop more sophisticated risk management strategies. Concerns about AI being manipulated for harmful purposes are growing, and they need to be addressed head-on. By understanding the risks associated with AI and taking proactive steps to mitigate them, we can help ensure that this powerful technology is used for the greater good.

- Be aware of the potential risks associated with AI

- Develop robust risk management strategies

- Invest in AI safety research

- Promote transparency and accountability in AI-driven decision-making

By taking these steps, you can help ensure that AI is developed and deployed in a responsible and beneficial manner.