Governments are rapidly deploying AI to root out corruption, but you face a critical dilemma: how do you use these powerful tools without raising fears of mass surveillance? Recent research suggests that targeted transformer models can spot anomalies in procurement data, yet the fear of “whole-of-internet” monitoring looms large. You need to understand how these systems work, where the risks lie, and why strict ethical guardrails are the only way to maintain public trust while fighting graft effectively.

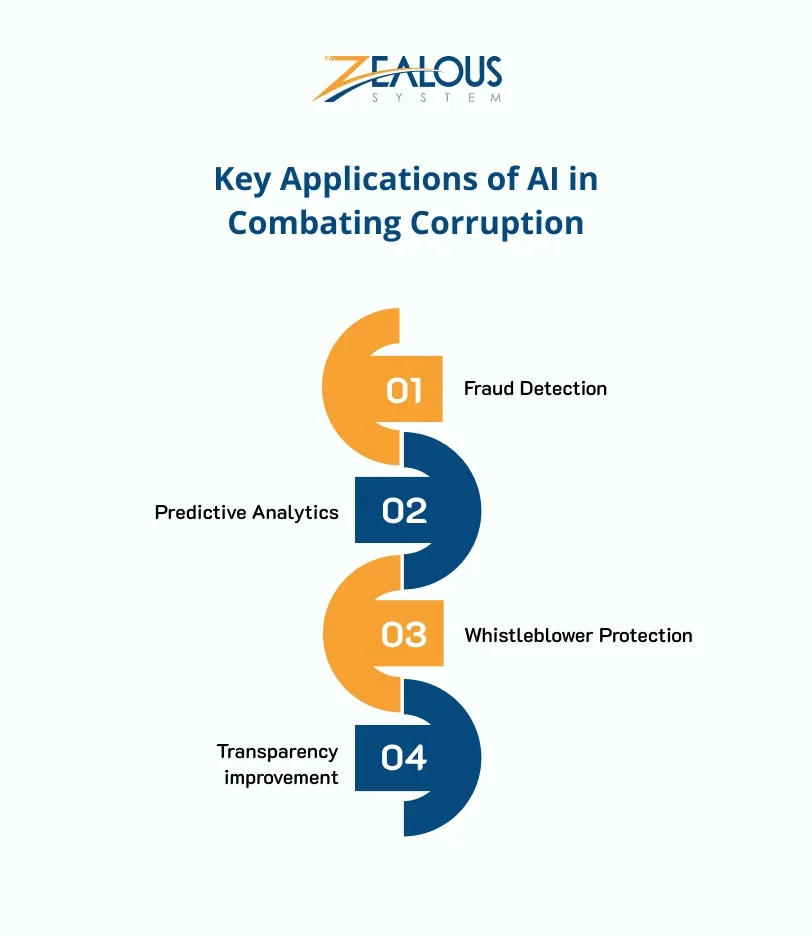

Targeted AI for Detecting Procurement Fraud

Spotting a bribe in a mountain of data is hard for human auditors, and AI can help. Recent studies highlight how transformer-based architectures can scan complex technical specifications and flag suspicious anomalies that might otherwise slip through the cracks. This isn’t about replacing your human team; it’s about giving them a sharper pair of eyes to catch errors and irregularities in real time.
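To make the "sharper pair of eyes" idea concrete, here is a minimal sketch of the hand-off from model to auditor. It assumes an upstream model has already assigned each tender an anomaly score between 0 and 1; the names `ScoredTender` and `build_review_queue`, the 0.8 threshold, and the queue cap are all hypothetical choices for illustration, not part of any particular system. The model itself is deliberately left out: the sketch covers only the triage step.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class ScoredTender:
    """A tender with a score from an upstream model (assumed, not shown here)."""
    tender_id: str
    anomaly_score: float  # assumption: higher means more unusual, range 0..1


def build_review_queue(
    scored: Iterable[ScoredTender],
    threshold: float = 0.8,   # hypothetical cut-off, to be tuned by the audit team
    max_items: int = 50,      # hypothetical cap so reviewers are not flooded
) -> List[ScoredTender]:
    """Return flagged tenders for human review, most suspicious first.

    Nothing is accepted or rejected automatically: the output is only a
    prioritized reading list for auditors.
    """
    flagged = [t for t in scored if t.anomaly_score >= threshold]
    flagged.sort(key=lambda t: t.anomaly_score, reverse=True)
    return flagged[:max_items]
```

The design choice worth noting is that the function only orders work for people. Every decision about a flagged tender stays with a human auditor.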

Why Specificity Matters

The key is narrowing the scope. Instead of scanning the entire internet, agencies are getting results by focusing specifically on technical procurement documents. This targeted approach lets you detect corruption risks without casting a wide net that could infringe on citizen privacy. When you limit data collection to specific contracts, you sidestep the broad surveillance pitfalls that critics often warn about.
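One way to enforce that narrow scope is to filter at ingestion, before any model sees the data. The sketch below is an illustration under stated assumptions: the `Document` fields, the document-type names in `ALLOWED_SOURCE_TYPES`, and the idea of an explicit list of contract IDs are all hypothetical, chosen only to show the pattern of an allowlist.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional, Set, Tuple

# Assumption: the only document types the system may ever ingest.
ALLOWED_SOURCE_TYPES = frozenset(
    {"tender_notice", "technical_specification", "contract_award"}
)


@dataclass(frozen=True)
class Document:
    doc_id: str
    source_type: str            # e.g. "technical_specification" (hypothetical labels)
    contract_id: Optional[str]  # None when the document is not tied to a contract
    text: str


def in_scope(doc: Document, contract_ids: Set[str]) -> bool:
    """A document is in scope only if its type is allowlisted AND it belongs
    to one of the contracts explicitly under review."""
    return (
        doc.source_type in ALLOWED_SOURCE_TYPES
        and doc.contract_id in contract_ids
    )


def select_in_scope(
    docs: Iterable[Document], contract_ids: Set[str]
) -> Tuple[List[Document], List[str]]:
    """Split input into documents to analyze and IDs of rejected documents.

    Returning the rejected IDs (not their text) lets you audit what was
    dropped without retaining out-of-scope content.
    """
    kept: List[Document] = []
    rejected_ids: List[str] = []
    for doc in docs:
        if in_scope(doc, contract_ids):
            kept.append(doc)
        else:
            rejected_ids.append(doc.doc_id)
    return kept, rejected_ids
```

Because the filter defaults to rejection, anything not explicitly listed (a social media post, or a document with no contract attached) never reaches the analysis stage.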

The Surveillance Dilemma You Can’t Ignore

While the technology offers promise, the story isn’t only about efficiency. There is a genuine fear that government-funded AI tools could be used for “whole-of-internet surveillance and censorship” rather than just anti-corruption efforts. If you aren’t careful, the same infrastructure designed to catch a thief could end up watching everyone.

The Risk of Overreach

Reports suggest that without strict guardrails, the push for transparency could slide into control. The challenge for leaders isn’t just technical; it’s deeply ethical. You must ensure that the algorithms used to find corruption aren’t the very same ones used to silence dissent. If the scope of data collection isn’t strictly limited, the tool becomes a weapon against the very people it’s meant to serve.

Building a Secure and Ethical Framework

Several vendors now offer roadmaps to help agencies scale their AI investments safely. These roadmaps treat security and mission alignment as non-negotiable, and they provide a structured approach to ensure that the technology serves the public good without compromising civil liberties.

What You Need to Do Now

For practitioners building these systems, the pressure is immense. You’re not just writing code; you’re defining the boundaries of accountability. The most effective strategy isn’t just about having the best model; it’s about implementing the most rigorous ethical framework to govern its use. If you can isolate the detection of corruption risks to specific procurement data, you can build a system that is both powerful and accountable.

The question remains: will you follow strict guidelines, or will you ignore them in favor of broader capabilities? The answer lies in your commitment to building a system that respects privacy while delivering results.